Meta’s censorship policies have long been a source of controversy, particularly when it comes to regulating content that is perceived as sexually explicit. According to the social media platform’s terms for Adult Nudity and Sexual Activity, Meta does not typically censor images of female breasts that include the nipple when they are posted in the context of protest, breastfeeding or post-mastectomy scarring. However, these policies have been criticized for being overly simplistic in distinguishing between male- and female-identifying bodies, which can lead to inconsistency and confusion in enforcement. Much is left up to Meta’s own algorithm, and the policies do not consider the nuances of those who fall outside the traditional binary options, including the intersex, non-binary and trans people who engage daily on the platform.

Meta has turned to its Oversight Board for clear guidance on how it can change and adapt its policies to address these concerns and provide greater clarity for users who believe the policies take a binary approach to gender. Although some critics have questioned whether these changes will be enough, they represent an important step toward promoting greater inclusivity and respect for diverse gender identities on the platform.

For over a decade, social media platforms such as Instagram and Facebook have banned all images of nipples for fear that they would unleash torrents of pornographic imagery. However, many academics and activists have argued that Meta needs to clarify how moderation is organized and enacted, and which policies and techniques its moderators use, because the rules appear to apply to some users but not others. This is why, over the course of 2019, Facebook consulted over 2,000 people worldwide to help establish the initial group of board members for Meta’s Oversight Board, which is meant to help the company answer and resolve complex questions about freedom of expression online. The Oversight Board can choose to hear content cases from either Meta directly or people on Facebook or Instagram who disagree with Meta’s content decisions.

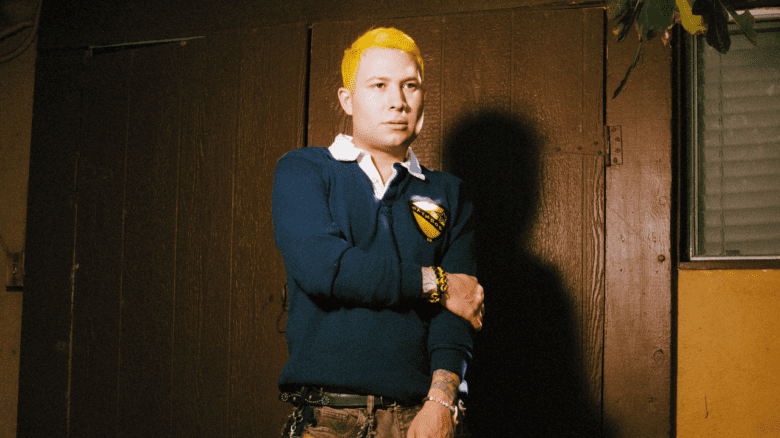

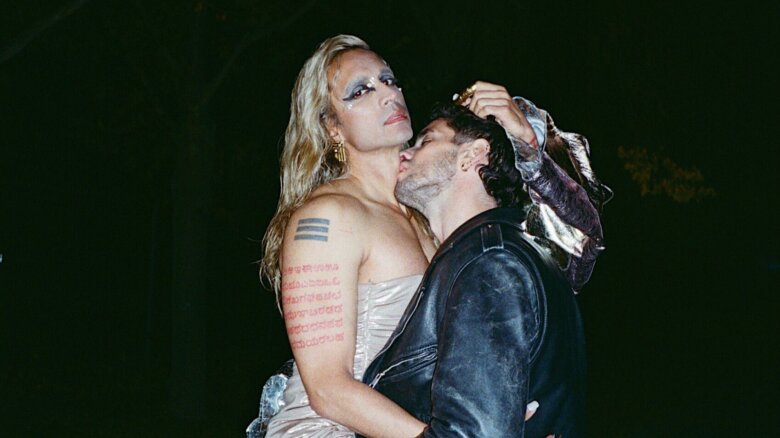

The Meta Oversight Board recently reviewed (as a bundle) and overturned the content moderation team’s decisions regarding two Instagram posts from 2021 and 2022. Both Instagram posts featured a trans and non-binary couple who announced a fundraiser for top surgery and included a link in their bio. Despite the absence of any visible nipples in either image, the posts were flagged for violating Instagram’s sexual solicitation community standard.

Christopher J. Persaud, a PhD student at the USC Annenberg School for Communication and Journalism researching how media and communication technologies entangle queer identity, says, “Users can read one policy online that tells them what is allowed on their platforms. But internally, content moderators have this entirely different set of guidelines not aligned with the external-facing policies.” It’s no wonder that many within the LGBTQ2S+ community have felt their rights violated, specifically trans and non-binary individuals.

Since the platform’s inception, activism has been taking place on Instagram, from “lactivists,” who advocate for breastfeeding, to the #FreeTheNipple campaign, which filmmaker Lina Esco started in 2012 to expose the double standards women face in public and on social media for appearing topless. The campaign went viral in 2013, after Facebook removed clips of Esco’s documentary of the same name from its website for violating its guidelines. In turn, celebrities such as Miley Cyrus, Lena Dunham and Rihanna posted topless photos in support.

Although Meta’s community standards are designed to protect the human rights of all users while ensuring a safe environment for everyone, its algorithm-based content-moderation system has proved questionable. Any guideline that addresses the nuances of gender expression must first clarify and enforce the platform’s moderation policies on discrimination, as Meta’s moderators still work from unclear or inadequate standards.

Case in point: Micol Hebron, a Los Angeles-based artist, activist and associate professor at Chapman University, created digital pasties of male nipples, which are permitted on Instagram, so that users whose nipples were identified as “female” could superimpose them over their own to mock the disparity. Hebron notes that individuals can “resize, cut and paste” the nipple image onto their own images as they see fit. They designed the digital pasty to “cover over unacceptable female nipples” being censored on Meta’s platforms, hoping it could help others who were being blocked or censored for expressing their identities. Since they started making this nipple art as a sarcastic response to Meta’s policies in 2014, and later had a bit of a viral moment in 2015, they have often wondered how exactly Meta’s algorithms determine the gender of a nipple.

“Does it mean it has more fat, hair or a bigger nipple? Maybe a different areola? Where are we drawing the line at?” The content standards, although having undergone minor modifications, still allow for unjustifiable violations, leaving room for improvement. “What indicates what is and isn’t a nipple? We need to be a little bit more specific,” Hebron laughs.

In January 2023, Meta’s Oversight Board released its decision on the posts by the trans and non-binary couple, whose images announcing their top surgery fundraiser had been pulled from Instagram. The board stated: “The restrictions and exceptions to the rules on female nipples are extensive and confusing, particularly as they apply to transgender and non-binary people.” It also recommended that Meta provide more detail in its public-facing Sexual Solicitation Community Standard and revise its guidance for moderators on that standard so that it more accurately reflects the public rules, which could reduce content-enforcement errors. While these recommendations are long overdue, the Oversight Board felt they were an essential next step toward making Meta’s overall content standards more inclusive and respectful of human rights, and toward creating an environment where everyone is respected and treated fairly, regardless of their gender identity. Meta has yet to respond, but it has 60 days to respond publicly to the board’s recommendations.

Hebron is skeptical, though. They believe that while these recommendations are made with good intent, the exceptions often need to be clarified and better defined, sharing that we can’t expect content moderators to assess the extent and nature of visible scarring to determine whether certain exceptions apply. “The lack of clarity inherent in this policy creates uncertainty for users and reviewers and makes it unworkable in practice,” they say.

Oddly enough, Persaud doesn’t disagree. He explains that all this demonstrates so far is “that there needs to be a broader structural change in line with human rights standards, internal and public-facing content-moderation policies.” This means creating more explicit guidelines for moderators and creators, developing better algorithms to detect violations of these guidelines and engaging with those within the LGBTQ2S+ community to ensure their voices are heard in future platform decisions. “We need more of a connection between the platform terms we are used to being moderated against and the guidelines the content moderators follow,” Persaud adds.

Ada Fogh is a trans artist from Copenhagen, Denmark, whose entire medium is AI and algorithmic content. Fogh’s latest project is called IN TRANSITU: each Thursday on Instagram, they upload an image update from their gender transition. The project is one part exploration and one part challenge to Instagram’s moderation protocols, meant to reveal, as Fogh explains in their artist statement, when “the largest image-based social media in the world sees me as a woman. However, it will also be a stark reminder that I have now lost my male privilege.”

Fogh credits the inspiration for this project to Victoria-based trans activist Courtney Demone, who was behind the #DoIHaveBoobsNow campaign. Demone posted topless photos of her transition on social media while undergoing hormone replacement therapy to demonstrate some of the social media censorship imposed on marginalized bodies. “Meta is not obliged to take action on the recommendations they have provided,” Fogh explains, noting that while the Oversight Board does help Meta resolve challenging issues, the company still has its investors’ best interests in mind.

Fogh explains that a large part of IN TRANSITU showcases how badly AIs and algorithms can fail, especially for the online trans and non-binary community. Fogh believes that if Meta decides to take the Oversight Board’s recommendations, the changes we may see implemented will be small. “I think they will try to maintain this image as an LGBTQ+ friendly platform, but can’t execute it on a broad-term basis. Because that is where the money comes in and affects their bottom line.”

In recent years, Meta has retooled its algorithm to control which ads appear in social media feeds, assuring brands that their ads are not running beside “unsafe” content. Genevieve Kuzak is a transmasculine photographer, videographer and media editor based in New York whose work explores elements of pornography, mental illness and sexuality. Kuzak is also skeptical that Meta’s guidelines will provide more leeway to queer people, especially when posting what could be considered erotic content. “The people that run the social media websites like Facebook don’t care about queer people. They don’t really care that it makes life more difficult for artists. This boils down to just that they don’t want sex workers on their platform. They don’t want anything having to do with porn,” they explain.

Kuzak may be on to something here. In the spring of 2018, the U.S. Congress passed the Stop Enabling Sex Traffickers Act (SESTA) and the Fight Online Sex Trafficking Act (FOSTA). A brief from the Transgender Law Center describes how FOSTA’s breadth has directly harmed lesbian, gay, trans and queer people, explaining how its regulation of speech furthers the profiling and policing of LGBTQ2S+ people, particularly trans and gender nonconforming people. It argues that the statute’s censorial effect has resulted in the removal of dialogue created by LGBTQ2S+ people and discussions of sexuality and gender identity.

Kuzak explains, “You can blur nipples pretty easily. I always do that. But the problem is that even if you blur nipples, they will still take it down for sexual solicitation. Now you’re just, like, censoring art and making it harder for trans people to exist. Because, you know, art has to do with sex work.” This raises the question: what, if anything, will realistically change about Meta’s policies? Under SESTA and FOSTA, online providers, including social media sites, can now be held liable for posts perceived to be advertising sex on their sites, and state law enforcement can prosecute these cases at its own discretion.

Meta’s Oversight Board has clearly stated that changes are needed to establish clear criteria that respect international human rights standards for all people on the platform, including intersex, trans and non-binary people. Even so, it’s clear that the censorship resulting from FOSTA has caused real and substantial harm to LGBTQ2S+ people’s First Amendment rights, as well as economic damage to LGBTQ2S+ people and communities. Even more so, it has made queer individuals distrustful of the platform altogether.

One prime example is non-binary model Jude Guaitamacchi, who had an image taken down for violating Instagram’s community guidelines. In the photo, they proudly faced the camera wearing boots and a leather jacket with their hands over their crotch. The inspiration for the image was Adam Levine, the singer from Maroon 5. In an interview, Guaitamacchi complained about the double standard that Instagram gave to celebrities, saying these policies felt like trans erasure. Individuals who aren’t removed from the platform outright may instead be shadow banned, meaning their account is strategically hidden from other users’ view. According to HubSpot, this happens without the user being informed: their content becomes restricted and their engagement starts to drop, because the content no longer appears under searchable hashtags or is removed entirely from the Explore page.

Persaud isn’t surprised. “When we look at algorithms and analyze what sort of images get to stay on the platform versus what images don’t get to stay on the platform—a lot of it to appease advertisers and to ensure that the people who line their pockets are happy and appeased.”

Many social media platforms rely on advertising revenue to exist, which creates a predicament: platforms can either appease advertisers or make room for diverse expression from people and communities that might otherwise be overlooked or marginalized. The Oversight Board’s most recent ruling reveals that the problem goes beyond discrimination against LGBTQ2S+ people and communities; Meta’s entire thought process and community standards need to be reconsidered. The impact on marginalized and vulnerable communities has already been made. It’s just a matter of whether Meta can truly fix it.

Why you can trust Xtra
